Proxies for Web Scraping: How They Work and How to Choose the Right Type

April 29, 2026

Guide

Web scraping is essential for competitor monitoring, rank tracking, product intelligence, and large dataset collection. At scale, scraping without proxies quickly fails due to rate limits and blocks.

Modern proxy networks rotate IPs automatically, so requests arrive from many addresses instead of one. That makes scraping traffic harder to flag and keeps workflows stable.

What Is a Proxy in Web Scraping?

A proxy server is an intermediary between your scraper and the target site. Requests are routed through proxy IPs instead of your own IP.
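To make the routing concrete, here is a minimal sketch using Python's standard `urllib.request`. The proxy URL and credentials are placeholders, not a real endpoint; substitute whatever your provider issues.

```python
import urllib.request

# Hypothetical proxy endpoint (placeholder host and credentials).
PROXY_URL = "http://user:pass@proxy.example.com:8080"


def build_proxied_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests via proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


opener = build_proxied_opener(PROXY_URL)
# opener.open("https://example.com", timeout=10) would now be sent through
# the proxy, so the target site sees the proxy's IP rather than yours.
```

Building the opener does not touch the network; only the commented-out `open` call would.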

At high request volumes, a single IP is easy to detect. Proxies distribute traffic across many addresses, reducing detection risk.

Why Proxies Are Essential for Scraping

Without proxies, scraping projects usually face:

- IP bans and blocks

- Rate limiting and slowdowns

- Geo restrictions

- CAPTCHA interruptions

Proxy networks enable automatic rotation, location-specific access, and higher long-run success rates.

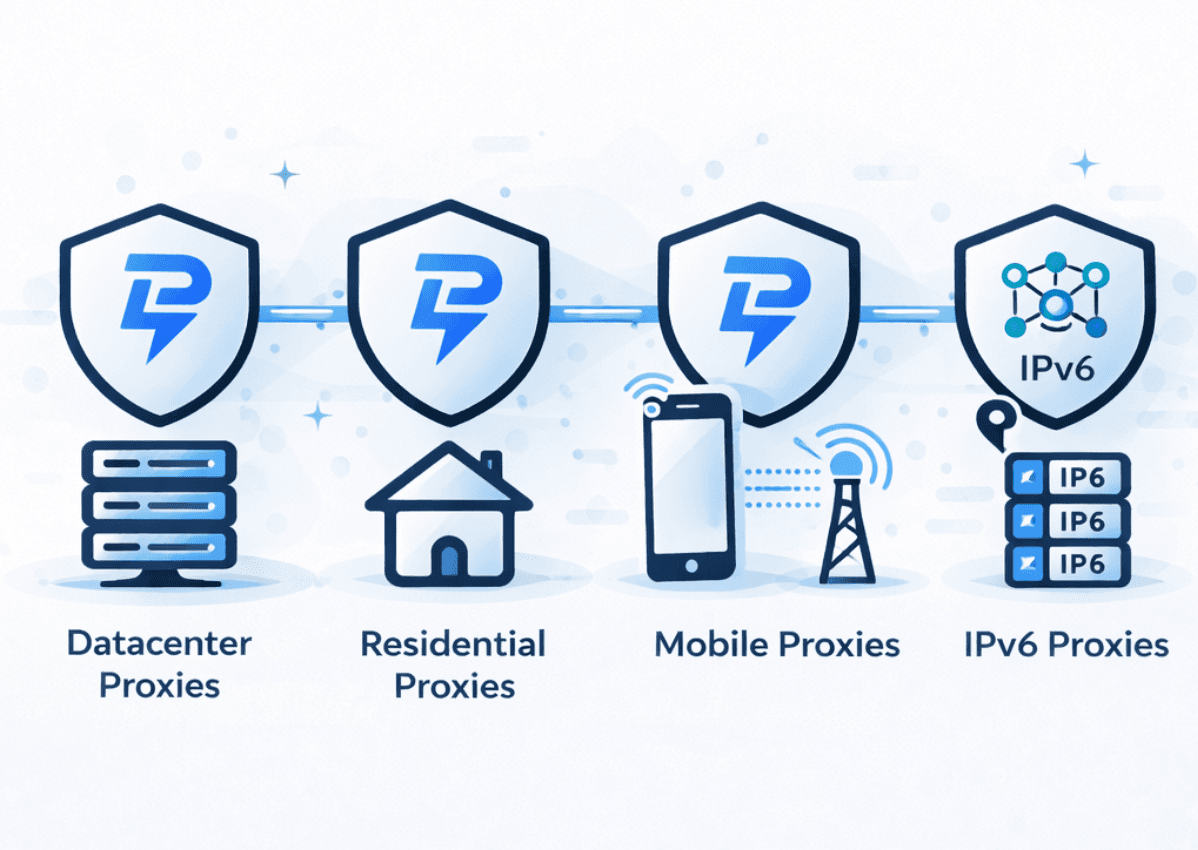

Types of Proxies Used for Web Scraping

Residential Proxies

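Residential proxies route traffic through IP addresses that ISPs assign to home connections. Target sites tend to trust these addresses, which makes residential proxies well suited to protected platforms.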

Datacenter Proxies

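Datacenter proxies use IP addresses hosted in data centers. They are typically faster and cheaper than residential proxies, which makes them cost-efficient for bulk scraping.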

ISP and IPv6 Proxies

ISP proxies offer session stability. IPv6 proxies support very large address pools, provided the target site accepts IPv6 traffic.

Mobile Proxies

Mobile proxies use IP addresses from mobile carrier networks, which can get them past stricter anti-bot defenses.

Proxy Providers and Services

Strong providers offer large, regularly refreshed IP pools, automatic rotation, reliable uptime, and responsive support. Evaluate proxy quality, geographic coverage, and dashboard tooling.

Free vs Paid Proxies

Free proxies are usually unreliable and frequently blocked. Paid proxies provide better speed, stability, security, and support for serious scraping workloads.

Accessing Protected Sites

Protected websites use CAPTCHAs, aggressive throttling, and bot fingerprinting. Combining rotation, session handling, and trusted proxy types improves access reliability.
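One way to combine rotation with block handling is to retry a request through a different proxy whenever the response looks like a block. The sketch below is illustrative only: treating HTTP 403 and 429 as block signals is an assumption, and `fetch` is a hypothetical callable you would supply (for example, a wrapper around your HTTP client).

```python
import itertools
from typing import Callable, Iterable, Optional, Tuple

# Assumption: these statuses mean "blocked or throttled" on the target site.
BLOCK_STATUSES = frozenset({403, 429})


def fetch_with_rotation(
    url: str,
    proxies: Iterable[str],
    fetch: Callable[[str, str], Tuple[int, str]],
    max_attempts: int = 3,
) -> Optional[Tuple[int, str, str]]:
    """Try url through successive proxies until a response is not blocked.

    fetch(url, proxy) must return (status_code, body).
    Returns (status_code, body, proxy_used), or None if every attempt
    within max_attempts hit a block status.
    """
    for proxy in itertools.islice(proxies, max_attempts):
        status, body = fetch(url, proxy)
        if status not in BLOCK_STATUSES:
            return status, body, proxy
    return None
```

Keeping `fetch` injectable means the retry logic can be exercised with a fake function before it is pointed at a real site.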

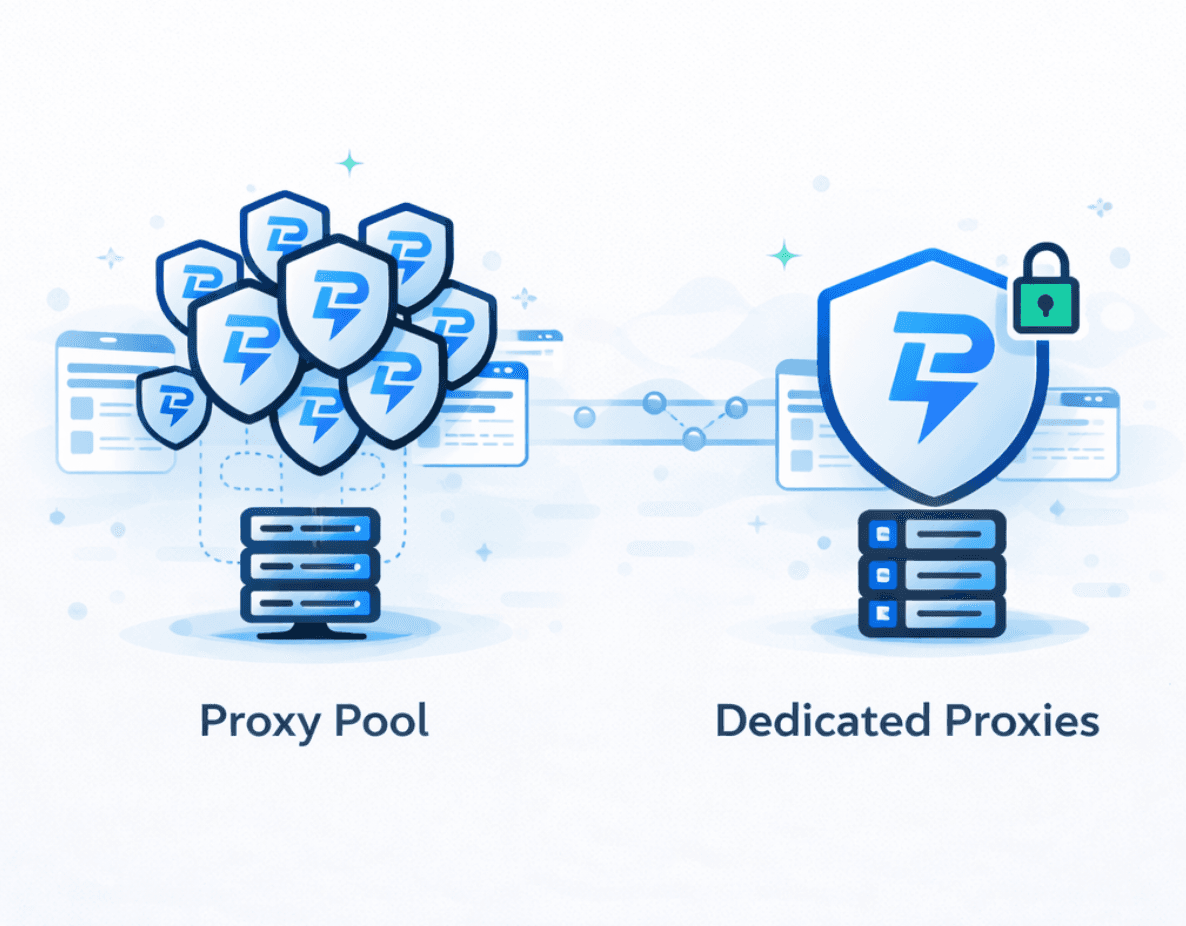

IP Rotation Strategies

Sticky sessions keep one IP for continuity. Rotating proxies change IPs per request or interval. Effective setups balance request volume and rotation frequency.
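The difference between the two modes can be sketched with a small pool class. This is a simplified illustration, not how any particular provider implements rotation; `ProxyPool`, its method names, and the default 600-second sticky lifetime are all assumptions made for the example.

```python
import itertools
import time


class ProxyPool:
    """Round-robin pool with optional sticky sessions (illustrative only)."""

    def __init__(self, proxies, sticky_ttl=600.0, clock=time.monotonic):
        if not proxies:
            raise ValueError("proxies must not be empty")
        self._cycle = itertools.cycle(proxies)
        self._sticky = {}  # session_id -> (proxy, expires_at)
        self._ttl = sticky_ttl
        self._clock = clock

    def rotating(self):
        """Return the next proxy in the pool: a new IP per request."""
        return next(self._cycle)

    def sticky(self, session_id):
        """Return the same proxy for session_id until its TTL expires."""
        now = self._clock()
        entry = self._sticky.get(session_id)
        if entry is None or entry[1] <= now:
            # First use, or the previous assignment expired: pick a new proxy.
            entry = (next(self._cycle), now + self._ttl)
            self._sticky[session_id] = entry
        return entry[0]
```

A scraper might call `rotating()` for stateless listing pages and `sticky("checkout-42")` for a multi-step flow that has to keep one IP.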

How to Choose the Right Proxy for Your Scraping Project

- Target website detection sensitivity

- Required volume and speed

- Geo-targeting needs

- Budget limits

- Session persistence requirements

Match proxy type to task rather than using one configuration for every target.
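As a rough illustration of matching type to task, the proxy descriptions earlier in this guide can be encoded as a small decision helper. The field names and the order of the checks are assumptions for the sake of the example; real projects will weigh budget, volume, and geo-targeting as well.

```python
from dataclasses import dataclass


@dataclass
class ScrapeJob:
    """Hypothetical description of a scraping task."""
    strictest_defenses: bool = False  # target has the toughest anti-bot setup
    protected_target: bool = False    # target is a protected platform
    needs_session: bool = False       # logins, carts, multi-step flows


def suggest_proxy_type(job: ScrapeJob) -> str:
    """Return a starting-point proxy type for the job (heuristic only)."""
    if job.strictest_defenses:
        return "mobile"        # carrier IPs for the strictest defenses
    if job.protected_target:
        return "residential"   # trusted IPs for protected platforms
    if job.needs_session:
        return "isp"           # stable sessions
    return "datacenter"        # fast and cheap for bulk scraping
```

For example, `suggest_proxy_type(ScrapeJob(needs_session=True))` returns `"isp"`, while a default job with no special requirements falls through to `"datacenter"`.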

Best Practices for Web Scraping with Proxies

- Limit request frequency

- Rotate headers and user agents

- Use proper session management

- Monitor response/error rates

- Avoid restricted or private data

- Stay compliant with applicable laws and policies
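The first few practices above can be sketched with standard-library Python. Everything here is illustrative: the class names, the sample user-agent strings, and the choice to count any status of 400 or above as an error are assumptions, not recommendations for a specific target.

```python
import random
import time
from collections import deque

# Illustrative user-agent strings; maintain your own current list.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.4 Safari/605.1.15",
]


class Throttle:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def build_headers(rng=random):
    """Pick a user agent at random for each request."""
    return {
        "User-Agent": rng.choice(USER_AGENTS),
        "Accept-Language": "en-US,en;q=0.9",
    }


class ErrorMonitor:
    """Track the error rate over the most recent responses."""

    def __init__(self, window=100):
        self._results = deque(maxlen=window)

    def record(self, status):
        # Assumption: any 4xx/5xx status counts as an error.
        self._results.append(status >= 400)

    def error_rate(self):
        if not self._results:
            return 0.0
        return sum(self._results) / len(self._results)
```

In a scraping loop, you would call `throttle.wait()` before each request, send `build_headers()` with it, and pass the response status to `monitor.record()`. A rising `error_rate()` is a signal to slow down or switch proxies.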

Final Thoughts

Web scraping becomes sustainable when proxy type, rotation strategy, and provider quality are aligned to the project. Proxies turn fragile scripts into durable data pipelines.

Implementation Notes

For additional reading, see Proxies for Scraping.

FAQ

Why are proxies necessary for web scraping?

Websites block repeated requests from one IP. Proxies distribute traffic across multiple addresses, reducing bans and improving scraping stability.

What type of proxy is best for web scraping?

How do rotating proxies work in scraping?

Can web scraping be done without proxies?

Are residential proxies better than datacenter proxies for scraping?

Is web scraping legal when using proxies?
